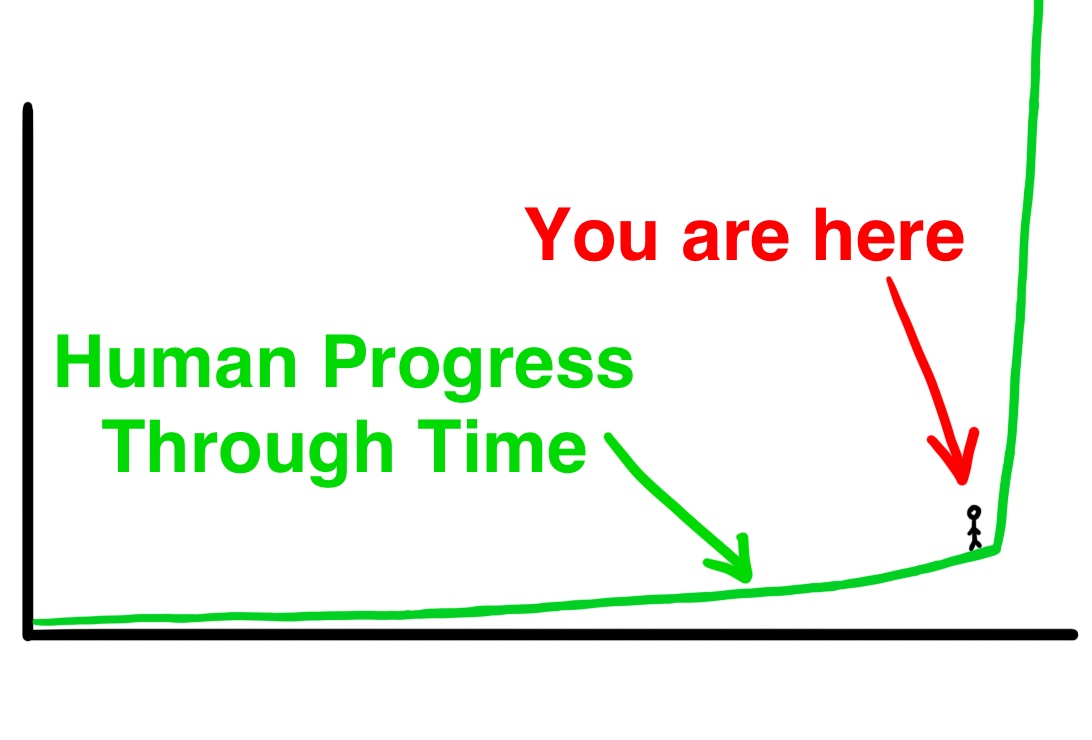

In an annual end-of-year tradition, Radical Ventures Partner Rob Toews shares 10 predictions for the world of AI in 2024 in his latest Forbes column. Rob makes some bold predictions, including Nvidia ramping up its push to become a cloud provider, alternatives to the transformer architecture gaining adoption, open models continuing to lag closed models, and Stability AI shutting down.

As reviewed in his recent retrospective, 8 out of Rob’s 10 AI predictions for 2023 came true, including profound transformations in search, the emergence of “LLMOps”, and significant progress in humanoid robots.

Here are the highlights of Rob’s 10 AI predictions for 2024:

1) Nvidia will dramatically ramp up its efforts to become a cloud provider.

“With the cloud providers looking to move down the technology stack to the silicon layer in order to capture more value, don’t be surprised to see Nvidia move in the opposite direction: offering its own cloud services and operating its own data centers in order to reduce its traditional reliance on the cloud companies for distribution. Nvidia has already begun exploring this path, rolling out a new cloud service called DGX Cloud earlier this year. We predict that Nvidia will meaningfully ramp up this strategy next year.”

2) Stability AI will shut down.

“It is one of the AI world’s worst-kept secrets: once-high-flying startup Stability AI has been a slow-motion trainwreck for much of 2023. Next year, we predict the beleaguered company will buckle under the mounting pressure and shut down altogether. Following pressure from investors, Stability has reportedly begun looking for an acquiror, but so far has found little interest.”

3) The terms “large language model” and “LLM” will become less common.

“As AI model types proliferate and as AI becomes increasingly multimodal, this term [‘LLM’] will become increasingly imprecise and unhelpful. The emergence of multimodal AI has been one of the defining themes in AI in 2023. Many of today’s leading generative AI models incorporate text, images, 3-D, audio, video, music, physical action and more. They are far more than just language models. In 2024, as our models become increasingly multi-dimensional, so too will the terms that we use to describe them.”

4) The most advanced closed models will continue to outperform the most advanced open models by a meaningful margin.

“Today, the highest-performing foundation models (e.g., OpenAI’s GPT-4) are closed-source. But many open-source advocates argue that the performance gap between closed and open models is shrinking and that open models are on track to overtake closed models in performance, perhaps by next year.

We disagree. We predict that the best closed models will continue to meaningfully outperform the best open models in 2024 (and beyond)…

(To be clear: this is not an argument against the merits of open-source AI. It is not an argument that open-source AI will not be important in the world of artificial intelligence going forward. On the contrary, we expect open-source models to play a critical role in the proliferation of AI in the years ahead. However: we predict that the most advanced AI systems, those that push forward the frontiers of what is possible in AI, will continue to be proprietary.)”

5) A number of Fortune 500 companies will create a new C-suite position: Chief AI Officer.

“Artificial intelligence has shot to the top of the priority list for Fortune 500 companies this year, with boards and management teams across industries scrambling to figure out what this powerful new technology means for their businesses.

One tactic that we expect to become more common among large enterprises next year: appointing a ‘Chief AI Officer’ to spearhead the organization’s AI initiatives.

We saw a similar trend play out during the rise of cloud computing a decade ago, with many organizations hiring ‘Chief Cloud Officers’ to help them navigate the strategic implications of the cloud.”

6) An alternative to the transformer architecture will see meaningful adoption.

“Introduced in a seminal 2017 paper out of Google, the transformer architecture is the dominant paradigm in AI technology today. But no technology remains dominant forever. The most recent sub-quadratic architecture [one whose compute cost grows more slowly than the square of the input length, unlike the transformer’s attention mechanism]—and perhaps the most promising yet—is Mamba. Published just last month by two [Chris] Ré protégés, Mamba has inspired tremendous buzz in the AI research community, with some commentators hailing it as ‘the end of transformers.’

To be clear, we do not expect transformers to go away in 2024. They are a deeply entrenched technology on which the world’s most important AI systems are based. But we do predict that 2024 will be the year in which cutting-edge alternatives to the transformer become viable options for real-world AI use cases.”

7) Strategic investments from cloud providers into AI startups—and the associated accounting implications—will be challenged by regulators.

“A tidal wave of investment capital has flowed from large technology companies into AI startups this year. Such investments can implicate an important gray area in accounting rules.

Say a cloud vendor invests $100 million into an AI startup based on a guarantee that the startup will turn around and spend that $100 million on the cloud vendor’s services. Conceptually, this is not true arms-length revenue for the cloud vendor; the vendor is, in effect, using the investment to artificially transform its own balance sheet cash into revenue.

These types of deals—often referred to as ‘round-tripping’ (since the money goes out and comes right back in)—have raised eyebrows this year among some technology leaders.

Expect the SEC to take a much harder look at round-tripping in AI investments next year—and expect the number and size of such deals to drop dramatically as a result. Given that cloud providers have been one of the largest sources of capital fueling the generative AI boom to date, this could have a material impact on the overall AI fundraising environment in 2024.”

8) The Microsoft/OpenAI relationship will begin to fray.

“As OpenAI looks to aggressively ramp up its enterprise business, it will find itself more and more often competing directly with Microsoft for customers. For its part, Microsoft has plenty of reasons to diversify beyond OpenAI as a supplier of cutting-edge AI models. Microsoft recently announced a deal to partner with OpenAI rival Cohere, for instance. Faced with the exorbitant costs of running OpenAI’s models at scale, Microsoft has also invested in internal AI research efforts on smaller language models like Phi-2.

Bigger picture, as AI becomes ever more powerful, important questions about AI safety, risk, regulation and public accountability will take center stage. The stakes will be high. Given their differing cultures, values and histories, it seems inevitable that the two organizations will diverge in their philosophies and approaches to these issues.”

9) Some of the hype and herd mentality behavior that shifted from crypto to AI in 2023 will shift back to crypto in 2024.

“Crypto is a cyclical industry. It is out of fashion right now, but make no mistake, another big bull run will come—as it did in 2021, and before that in 2017, and before that in 2013. In case you haven’t noticed, after starting the year under $17,000, the price of bitcoin has risen sharply in the past few months, from $25,000 in September to over $40,000 today. A major bitcoin upswing may be in the works, and if it is, plenty of crypto activity and hype will ensue.”

10) At least one U.S. court will rule that generative AI models trained on the internet represent a violation of copyright. The issue will begin working its way up to the U.S. Supreme Court.

“A significant and underappreciated legal risk looms over the entire field of generative artificial intelligence today: the world’s leading generative AI models have been trained on troves of copyrighted content, a fact that could trigger massive liability and transform the economics of the industry…

The answers to these questions will hinge on courts’ interpretation of a key legal concept known as ‘fair use’. Fair use is a well-developed legal doctrine that has been around for centuries. But its application to the nascent field of generative AI creates complex new theoretical questions without clear answers.”

Read Rob’s 2024 predictions in full. Rob writes a regular column for Forbes about the big picture of artificial intelligence.

Radical Reads is edited by Ebin Tomy (Analyst, Radical Ventures)