This week Radical Ventures finished our inaugural AI Founders Master Class, a program that aims to help AI researchers build AI companies. Over 200 students and faculty from the Vector Institute for Artificial Intelligence, the Alberta Machine Intelligence Institute, Mila (the Quebec Institute for Machine Intelligence), Stanford University, and Oxford University registered for the four-week session.

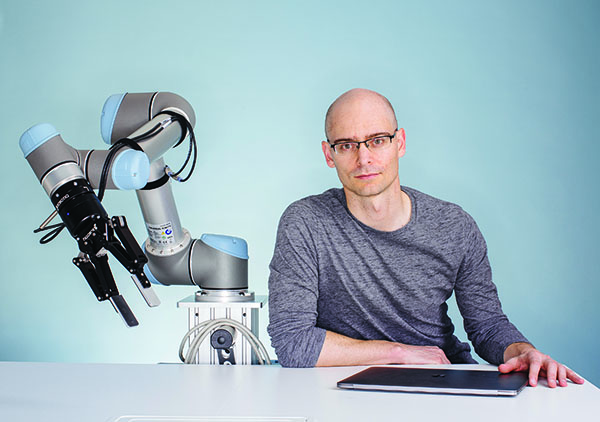

Below, we are sharing an exclusive excerpt from week two of the AI Founders Master Class, which focused on foundational questions underpinning every business: identifying the right customer and understanding what problem your business is solving. It featured a conversation between Pieter Abbeel and Cameron Schuler, Chief Commercialization Officer and VP of Industry Innovation at the Vector Institute for Artificial Intelligence. Pieter is a Professor of Electrical Engineering and Computer Science at the University of California, Berkeley, Director of Berkeley’s Robot Learning Lab, and co-founder of Radical Ventures portfolio company Covariant, a company that has built a universal AI platform for robotics. The conversation focused on the first principles of starting a business, with particular attention to what it means to make the leap from AI researcher to AI founder.

The following has been edited for length and clarity.

Cameron Schuler: How did you make the leap from academia to where you are today as a founder?

Pieter Abbeel: At the time, I looked to Sebastian Thrun as a model. He became the world leader in a specific technical area, one where he could do it better than anybody else, and then the moment came when that area became the thing around which you could build new products and applications. That was always in the back of my mind when I was a student. The moment took a while, but then it hit. I decided that robotics was ready. The next generation of robotics will be powered by AI, and this was my moment to take this step.

CS: How did you know it was the right time for AI in robotics? Was there anything specific that you keyed on that helped you make the decision to go from something in the lab at a university into something commercial?

PA: Keep in mind that deep learning, in its current incarnation, originated in Toronto with Geoffrey Hinton, Alex Krizhevsky, and Ilya Sutskever. It started really breaking through in 2012. But I would say AI in robotics didn’t break through as quickly. In some ways it is still in the process of breaking through compared to image recognition, speech recognition, or machine translation.

But by 2017 we were building AI-powered robots that could do things well beyond the typical robots in car and electronics factories that do repeated motions. It just seemed that the time had come to start working on the transition from blind, repeated-motion robots with limited capabilities to robots that see, react to what they see, and do something in the world.

In 2017, there were a handful of companies that reached out to me after seeing our papers and videos. They would ask us to come and do the work they needed done in warehouses, factories, hotels, and farms. People were hopeful about bringing something new into their workflows, and that made me hopeful that we could indeed go and deliver our products without encountering a lot of friction in the adoption phase.

CS: In terms of how AI is making a difference in robotics, what have been some of the big unsolved problems or areas in need of some attention?

PA: My co-founder, Peter Xi Chen, and I thought a lot about that when we started Covariant. We went into about 200 meetings with potential customers. Pretty much any workflow you can think of that has people working with their hands, we went to those industries to try to find out where to go first. We talked to manufacturing industries, agriculture, construction, hotels, and elderly care.

We quickly realized that nearly every industry was excited to get help from robots. Warehousing clearly stood out as a rapid adopter, interested in solving the picking and placing problem. Even though some problems might look solved because there are videos showing a robot doing something for five minutes, the robot actually needs to function 24/7, and getting there is really hard. Four years ago, there was nothing that could do it. So we backtracked from saying, let’s solve something really hard like unpacking and returns processing. We really needed to go to the simplest problem first. It was an entirely different kind of research than we tend to do in academia. But today, picking and placing has been solved well enough to generate real commercial value.

AI News This Week

- Companies order record number of robots amid labour shortage (Wall Street Journal – subscription required)

Many companies have been hard-pressed to find workers amid labour shortages both in the US and Canada. Warehouses are increasingly turning to robots to help with operations. Radical Ventures portfolio company Covariant provides advanced AI systems to enable robots to work with a wider array of objects. While fears remain that automation will replace humans, the World Economic Forum predicts that automation will result in a net increase of 58 million jobs. As technology takes on tasks that are better suited to automation, more consideration will need to be given to upskilling workers who will be on the front lines of interacting with AI systems.

- Using machine learning to derive black hole motion from gravitational waves (Science X Network)

A multidisciplinary team has developed a machine learning-based technique capable of automatically deriving a mathematical model for the motion of binary black holes from raw gravitational wave data. Gravitational waves are produced by cataclysmic events such as the merger of two black holes. They ripple outward as the black holes spiral toward each other and can be detected by installations such as the Laser Interferometer Gravitational-Wave Observatory (LIGO). In 2016, LIGO announced its first successful detection of gravitational waves from the merger of two black holes. The event sent ripples throughout the scientific community because it confirmed one of Albert Einstein’s key predictions in his general theory of relativity, and also opened a door to a better understanding of the motion of black holes and other spacetime-warping phenomena. While today’s wave detectors are not sensitive enough to lead to further discoveries, the new ML-based technique could be used for generating even more information.

- The long search for a brain computer interface that speaks your mind (Wired)

Brain computer interfaces (BCIs) bring us closer to the imaginations of science fiction. But work on speech neuroprosthetics has been underway for at least 30 years. These devices aim to provide a natural communication channel to individuals who are unable to speak due to physical or neurological impairments. Real-time synthesis of acoustic speech directly from measured neural activity could enable natural conversations and notably improve quality of life. Present-day BCIs approved for human use are slow to go from brain data to producing a sound, making real-time conversation impossible. BCIs currently do not have enough electrodes to capture all the data researchers would like. Future tech like Neuralink may speed up this process.

- AI and art: Liǎn mask uses artificial intelligence to respond to your emotions (Dezeen)

AI can help us explore new approaches to design that are reactive to context and non-physical external stimuli. Central Saint Martins graduate Jann Choy has created an inflatable mask that reacts to your online behaviour. This one-minute video shows it in action. Named Liǎn, the mask is an experimental design piece that aims to “explore the performance of our virtual selves and the relationship between our online and offline personas.”

- Obituary: Pamela McCorduck, Historian of Artificial Intelligence (The New York Times)

One of AI’s earliest chroniclers, Pamela McCorduck, passed away last month at the age of 80. Despite teaching in the English departments of Carnegie Mellon and Columbia, she spent over 60 years working alongside some of the earliest pioneers of AI. In 1979, when artificial intelligence still remained the stuff of theory, McCorduck authored “Machines Who Think: A Personal Inquiry Into the History and Prospects of Artificial Intelligence,” where she described the founders of a new science who had conceived of expert systems, speech understanding, robotics, general problem-solving and game-playing machines. “AI has pervaded Western intellectual history,” she wrote. “A dream in urgent need of being realized.”

Radical Reads is edited by Ebin Tomy (Analyst, Radical Ventures)