Building on Radical Partner Rob Toews’ exploration of continual learning last spring and our Radical Talks podcast on the topic, Radical Partner Molly Welch maps the evolving research landscape and examines what solving this critical bottleneck could unlock for agentic AI.

At the highest level, building modern AI systems comprises two distinct phases. First, models are trained by ingesting massive datasets and spending weeks adjusting their neural network weights. Then comes inference, in which weights are frozen and models are deployed for real-world use. Once deployed, the model no longer learns: weights are static until the next training run.

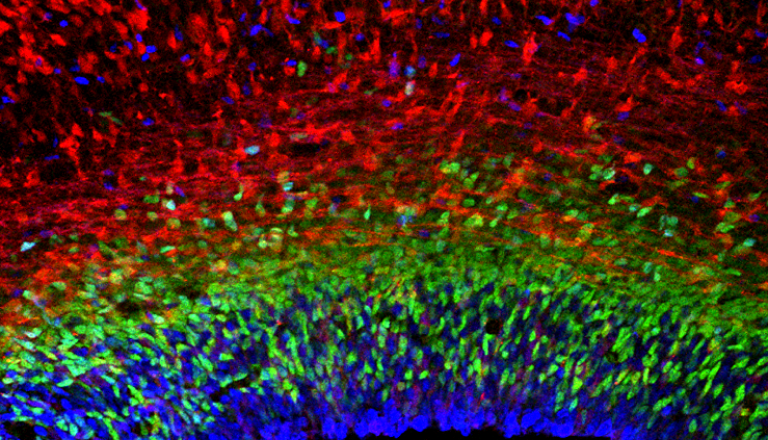

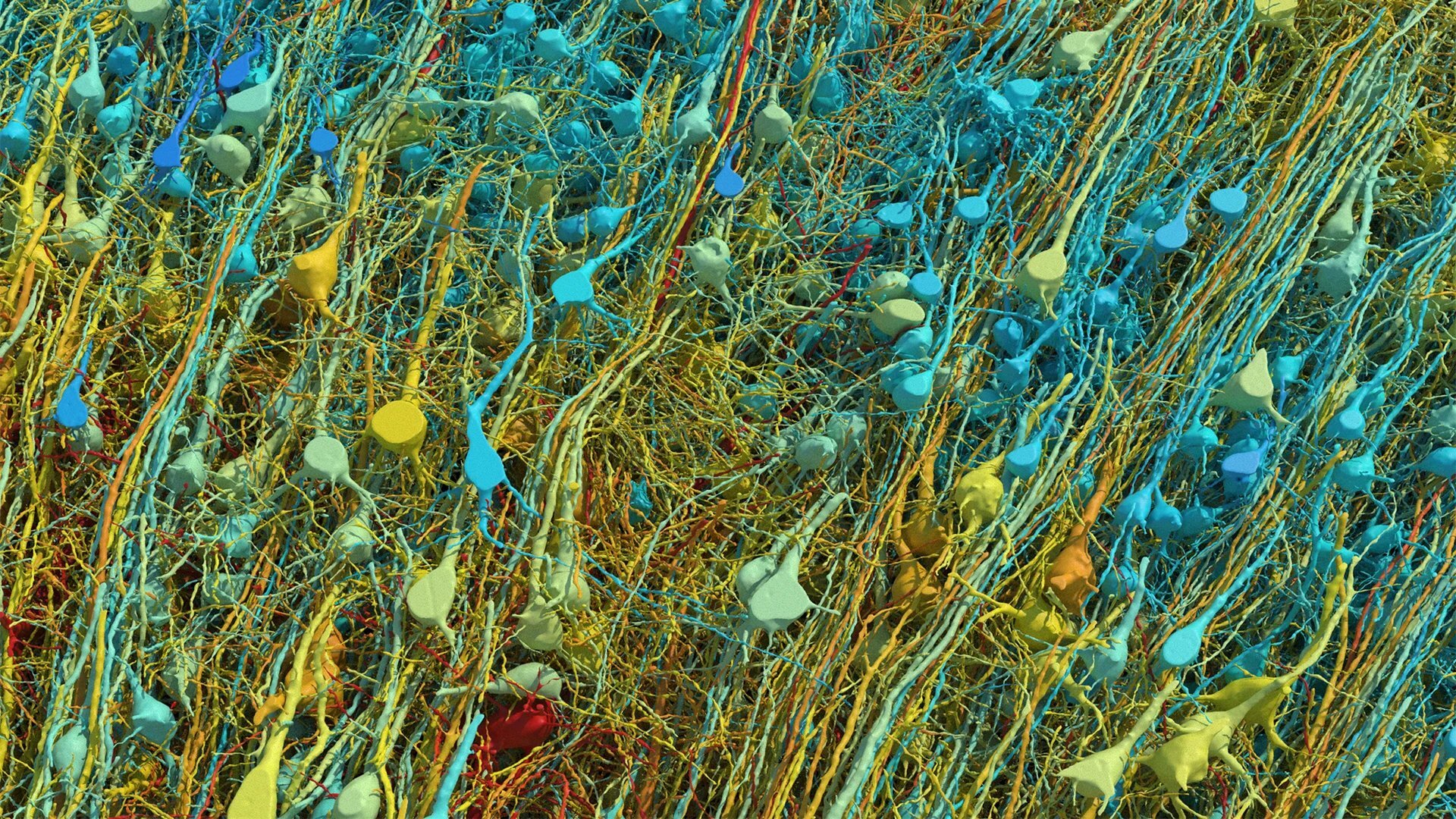

Of course, this is fundamentally different from human cognition. People do not have separate training and inference phases: we learn continuously, seamlessly integrating new information with existing knowledge. Neural networks, by contrast, suffer from what’s known as catastrophic forgetting. Train them on new information, and they overwrite what they learned before.

Continual learning, and attempts to mitigate catastrophic forgetting in particular, have been a long-time focus of the machine learning and deep learning communities. However, over the past year, continual learning has gained newfound urgency as agentic systems are deployed in more contexts. On many long-horizon cognitive tasks, models can be bottlenecked by their “frozen” cognition. Unlike a human employee, you can’t give an agentic system feedback; models also struggle to remember key pieces of information valuable for task performance.

The AI community has developed several approaches to enable models to adapt their behavior at inference without updating their parameters to incorporate new knowledge. Each has significant limitations.

In-context learning leverages the fact that large models can learn from information provided in their context window. Context windows are expanding rapidly, making in-context learning increasingly viable; models can now take in substantially large data corpuses in context. However, in context-learning is ephemeral, disappearing when you close the session. In-context learning is also computationally expensive, linearly expanding key-value cache (KV) memory, limiting throughput and increasing latency for user queries. For enterprise use cases that require persistent, shared knowledge that accumulates over time, in-context learning is insufficient. Performance can also degrade as context grows.

Supervised fine-tuning, or SFT, is a common approach that involves periodically updating a model with new data through additional training runs. However, this process has shown to generalize poorly and lead to severe catastrophic forgetting. Moreover, it’s often not practical: SFT is resource-intensive, time-consuming, and requires specialized expertise. Even organizations with deep pockets struggle to fine-tune models on a regular cadence.

Retrieval-augmented generation (RAG) combined with external memory databases offers another solution. Rather than updating the model’s weights, new information gets stored in a database for the model to retrieve as needed. This works for some use cases, but struggles with scalability. As databases grow, retrieval accuracy suffers and latency increases.

None of these approaches provides a comprehensive solution to the challenge of making models truly adaptive over time.

Research Breakthroughs on the Horizon

The field of continual learning is experiencing renewed energy, driven by both market needs and several promising research directions. Three approaches in particular have generated significant excitement in the research community:

Cartridges: This work, led by Sabri Eyuboglu at Stanford, introduces an offline training approach to continual learning that seeks to mitigate KV cache memory usage challenges. Ebuboglu et al. trained “cartridges,” or compact memory modules that study a user’s context offline, enabling AI systems to respond to queries without re-analyzing full context corpora. The cartridge framework leverages the flexibility of in-context learning with enhanced efficiency, enabling models to load and unload specialized modules for different tasks or domains. It’s an elegant approach with meaningful promise for personalization, but not a free lunch: cartridges are computationally expensive to train initially, even if more lightweight when used.

Self-distillation: This technique allows models to learn from their own outputs in a continuous loop, refining their understanding over time without requiring external supervision. The model essentially becomes its own teacher, using confident predictions to guide ongoing learning. Early results suggest this approach can help models adapt to new data while preserving prior knowledge much more effectively than approaches like SFT.

Test-time discovery: Perhaps the most intriguing development is research from Stanford and NVIDIA that explores how models can discover new patterns and knowledge during inference itself – blurring the boundaries between training and inference. In so-called test time training to discover, models essentially “practice” on test questions as a mechanism for solving harder problems. This method is very promising, but it can only be applied today on problems with continuous rewards, or when the model can be “scored” based on its output, e.g. in mathematics, GPU kernel engineering, and biology. It is not yet proven in problems with binary or sparse rewards or in non-verifiable domains, as in improving outputs to a user’s query about a travel itinerary.

Advances in the scalability of Reinforcement Learning (RL) for long-horizon tasks is making it possible for models to adapt in ways that previously were not possible. We are already seeing the first commercial implementations of this, such as Radical Ventures portfolio company Writer‘s self-evolving models, which periodically fold short-term insights into the model’s weights.

Practically, continual learning has immediate applications across AI systems, whether it be in customer support, sales tools, legal research, financial analysis, or any field where accumulated knowledge adds value. The field of robotics may see the greatest impact, with systems learning in real time from new situations rather than waiting for data collection and retraining cycles – maybe finally making general-purpose robotics viable.

Today’s foundation models are largely interchangeable. But imagine an AI product that truly gets to know you over time. After months of use, switching to a competitor means starting over from scratch, losing all that accumulated understanding. This creates stickiness in a way that today’s AI products cannot replicate.

Does solving continual learning unlock AGI? Perhaps not on its own. AI systems already surpass human capabilities in many domains while still lacking continual learning. And even if we achieve robust continual learning, other challenges remain.

But continual learning does represent one area where human cognition remains distinctly superior to artificial systems. Bridging this gap would unlock new categories of applications and bring us meaningfully closer to AI systems that learn and adapt like biological intelligence.

What’s clear is that the age of static, frozen models is giving way to something more dynamic and adaptive. And it’s about time.

AI News This Week

-

AI Pioneer Fei-Fei Li's World Labs Raises $1 Billion in Funding (Reuters)

Radical Ventures portfolio company World Labs, co-founded by AI pioneer and Radical Scientific Partner Fei-Fei Li, raised $1 billion in a new funding round. Autodesk contributed $200 million and will serve as a strategic adviser, with the two companies collaborating to bring World Labs’ spatial intelligence models into 3D design workflows. The company’s first product, Marble, lets users create editable, downloadable 3D environments from image or text prompts.

-

AI is Indeed Coming – But There is Also Evidence to Allay Investor Fears (The Guardian)

Investors are reassessing the value of companies that rely heavily on selling software or specialist knowledge. The release of new, more powerful AI tools has triggered stock market declines among traditional Software as a Service (SaaS) providers and companies spanning a diversity of sectors, from legal services and logistics to drug distribution and comparison shopping sites. Radical Ventures Partner Aaron Rosenberg argues the impact of AI is still underestimated in the long term, and adoption of groundbreaking models will not be uniform: “History shows a repeated pattern of there being a significant lag between a technology working in a lab and it permeating the wider economy, as well as a chasm between early adopters and the majority of users.”

-

From Cats and Dogs to Erdős: AI Groups Chase Progress Through Maths (FT)

AI labs are increasingly using unsolved mathematical problems to test their systems in the race to build more capable models. Once thought beyond the reach of probabilistic language models (LLMs), math’s demands for precision, abstract reasoning, and logic are now being met by reasoning models that solve problems step by step while self-correcting. Strong mathematical reasoning has also proven commercially valuable, enabling the development of more powerful coding agents. The key bottleneck remains continual learning as models cannot retain and build on prior knowledge or tackle problems that take weeks or years to solve (see our editorial feature, above).

-

Will Self-Driving ‘Robot Labs’ Replace Biologists? Paper Sparks Debate (Nature)

Autonomous labs pairing LLMs with robotics are beginning to outperform human-led research, testing tens of thousands of experimental conditions at scale. These labs perform a closed loop of AI-driven hypothesis generation, robotic experimentation, and result interpretation fed back into the next cycle. Radical Ventures portfolio company Periodic Labs is exploiting this directly, building AI scientists that use autonomous labs to generate proprietary experimental data to create high-temperature superconductors. Radical Ventures portfolio company Intrepid Labs‘ VALIANT platform uses robotics to prepare hundreds of unique drug formulations daily while minimizing material per sample. Key open questions remain around how dexterous robotics can become for complex tasks, how human wet-lab expertise fits into increasingly automated workflows, and what models of shared access will make autonomous labs broadly available to researchers.

-

Research: Evolving Agents via Recursive Skill-Augmented Reinforcement Learning (UNC-Chapel Hill et al)

SKILLRL addresses the continual learning problem (see our editorial feature, above) by distilling raw interaction trajectories into reusable behavioural patterns, with the skill library and agent policy co-evolving recursively during training. Better skills generate better performance, which in turn generates better training data.

Radical Reads is edited by Ebin Tomy (Analyst, Radical Ventures)