Radical Ventures partner Rob Toews has published his latest column in Forbes, about the urgent need for greater collaboration between the neuroscience and artificial intelligence communities. We are featuring the introduction to that article in this week’s Radical Reads. See here for the full article in Forbes. You can find all of Rob’s Forbes columns here.

The most consequential technological race in the history of civilization is underway. The world’s leading companies are investing hundreds of billions of dollars in pursuit of a singular goal: human-level artificial intelligence.

No one has yet succeeded. Not Google, not OpenAI, not Anthropic.

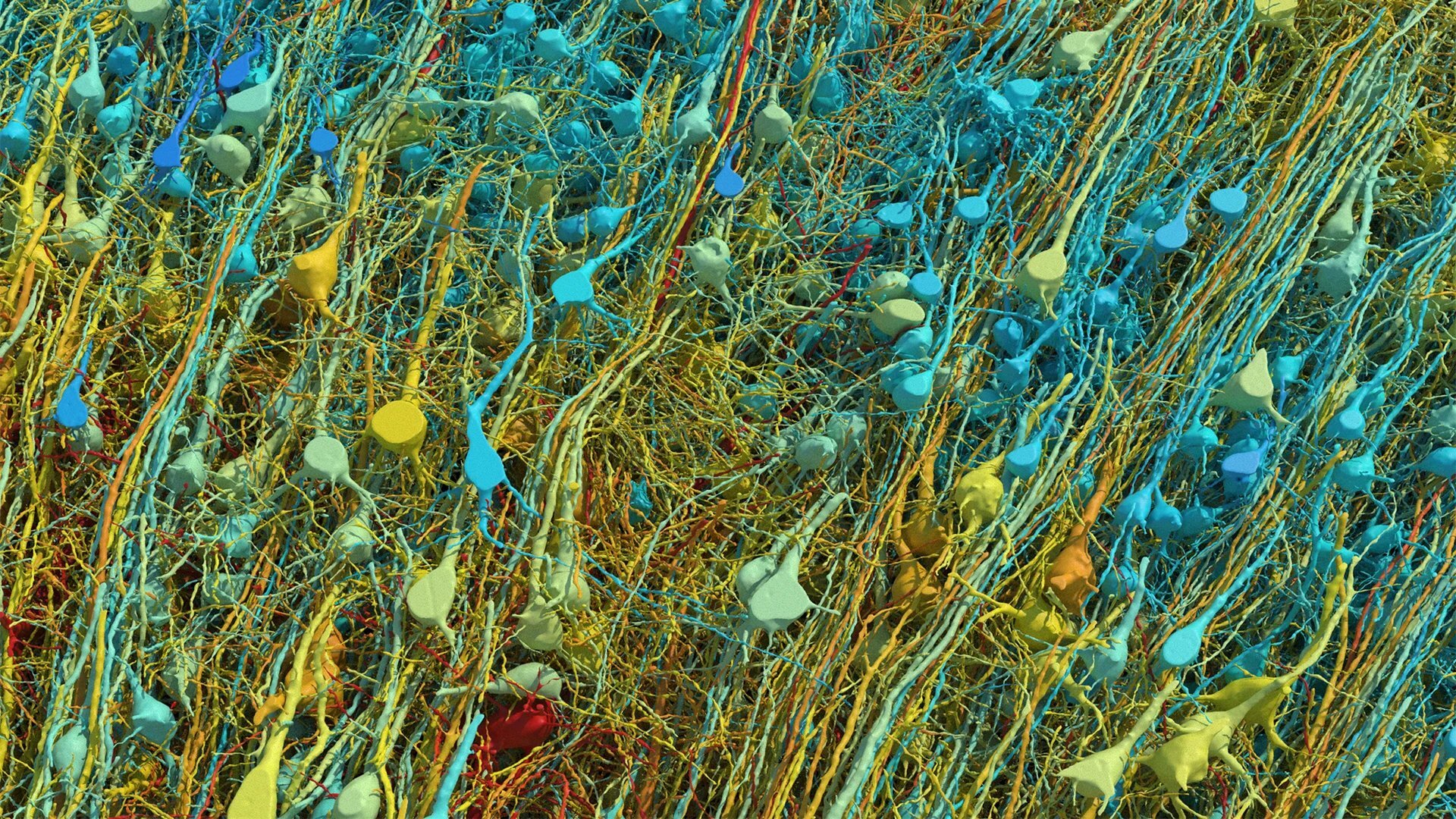

To this day, in the known universe, only one example exists of a system capable of human-level intelligence. That system is the human brain.

It is remarkable, then, how little we still understand about how the human brain works. It is even more remarkable — and nonsensical — how little we are investing to advance that understanding.

The total resources that humanity is devoting to understanding the human brain are disproportionately, almost comically meager compared to the resources being poured into scaling up today’s prevailing AI paradigm.

Last year, four companies alone — Alphabet, Amazon, Meta, Microsoft — spent close to $400 billion on AI infrastructure: the data centers, chips and energy to train and run massive deep learning models. This year, that figure will grow to over $600 billion. By the year 2030, McKinsey estimates that humanity will deploy an incredible $6.7 trillion to build AI data centers.

Let’s compare these figures to the most important brain research effort of 2025.

Last year, the nonprofit organization Astera launched one of the most well-funded brain research initiatives in history, headed by luminary neuroscientist Doris Tsao. The new program made headlines given the unprecedented size of the investment.

As Astera wrote about this effort: “We seek to understand one of the deepest mysteries of science: how the brain produces conscious experience, cognition, and intelligent behavior…Uncovering these principles would transform both neuroscience and technology — revealing the mechanism responsible for generating conscious experience, and at the same time, providing a new framework for AGI.”

The size of the Astera program? $600 million over 10 years. This is ten thousand times — four orders of magnitude — less investment per year than just a few companies will spend on AI data centers this year alone.
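As a rough check on that ratio, the arithmetic can be sketched as follows. This is a minimal sketch that uses only the figures quoted above; the variable names are illustrative, and the $600 billion figure is treated as a floor because the article says "over $600 billion."

```python
import math

# Figures quoted in the article (variable names are illustrative assumptions).
ASTERA_TOTAL_USD = 600_000_000             # $600 million program
ASTERA_YEARS = 10                          # spread over 10 years
AI_INFRA_THIS_YEAR_USD = 600_000_000_000   # "over $600 billion", used as a floor

# Annualize the Astera commitment, then compare it to one year of AI spend.
astera_per_year = ASTERA_TOTAL_USD // ASTERA_YEARS
ratio = AI_INFRA_THIS_YEAR_USD // astera_per_year
orders_of_magnitude = round(math.log10(ratio))

print(f"Astera per year: ${astera_per_year:,}")
print(f"Ratio: {ratio:,}x (about {orders_of_magnitude} orders of magnitude)")
```

Because the AI infrastructure figure is a lower bound, the true gap would be at least this large.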

The largest initiative ever undertaken to understand the human brain is the U.S. government’s BRAIN Initiative, a multi-agency effort that launched in 2014. The aggregate amount that has been invested in the BRAIN Initiative, over the course of more than a decade, is a mere $3 billion. In 2023, its single biggest year of funding, the BRAIN Initiative topped out at $680 million of investment.

The relative magnitude of these figures is stark. This represents a serious misallocation of resources at a societal level. It also represents a massive opportunity.

Few things are more valuable or highly sought after in today’s world than better understanding the nature of intelligence. After all, it is this pursuit — to better understand intelligence in order to create and monetize digital versions of it — that ultimately motivates the trillions of dollars being spent on AI infrastructure. It is this pursuit that has made Nvidia the most valuable company in the world. It is this pursuit that is rapidly propelling OpenAI and Anthropic to trillion-dollar valuations.

What if the most effective and high-leverage way to advance our understanding of the nature of intelligence, and thus our ability to develop powerful artificial versions of it, is not to spend trillions of dollars and devote so much of the energy and compute in the world to scaling today’s (evidently imperfect) AI paradigm, but rather to better understand, from first principles, the one actual proof point we have of a generally intelligent system: the human brain?

This seems like a sensible, even obvious, thesis to pursue. Based on the way humanity is allocating its resources today, though, it remains a wildly non-consensus view.

How, specifically, could our pursuit of AI be enhanced by better understanding the human brain? What is it that the brain can do that modern AI systems still cannot do?

There are many answers to this question, but let’s focus on three.

Read the full article in Forbes here.

AI News This Week

- Aspect Biosystems Secures $79-million in Federal Funding to Advance 3-D Tissue Printing Technology (Globe and Mail)

Radical Ventures portfolio company Aspect Biosystems has secured $79 million in Canadian federal funding toward a $280 million multi-year project to expand its capacity for 3-D printing live tissue implants. The company’s technology uses specialized bioprinters to produce synthetic tissues composed of living stem-cell-derived cells and hydrogel polymers, designed to replace or supplement impaired organ functions. Aspect is partnering with Novo Nordisk to develop cellular treatments for Type 1 diabetes, with Aspect leading development, manufacturing, and commercialization.

- The New Jobs Being Created by AI (WSJ)

AI created 640,000 jobs in the U.S. between 2023 and 2025, according to LinkedIn data, not including data center construction roles. AI-related postings more than doubled as a share of all job listings over that period, from 1.6% to 3.4%. The new roles span a wide range: “head of AI” positions grew 49% over three years, while data annotator roles, often part-time, added 312,000 positions. A growing segment of the annotator workforce consists of domain experts, including researchers with PhDs and practicing physicians, who train models on specialized knowledge that cannot be sourced from the open internet. A survey of 750 CFOs found that AI had essentially no negative effect on employment in 2025.

- How A.I. Helped One Man (and His Brother) Build a $1.8 Billion Company (NYT)

In 2024, experts predicted that AI would soon make a one-person billion-dollar company possible. A telehealth startup built by a single founder using over a dozen AI tools may be the first example. The company, which sells GLP-1 weight-loss drugs, generated $401 million in its first full year with only two employees and is on track for $1.8 billion in 2026. The founder used LLMs for code and copy, generative models for ad creative, and AI agents for customer service, outsourcing medical infrastructure to telehealth-in-a-box platforms. As AI tools grow more capable and affordable, the playbook of building asset-light, AI-native businesses on top of existing infrastructure is likely to spread.

- Breakthrough Computer Chip Tech Could Help Meet ‘Monumental Demand’ Driven by AI (Nature)

ASML’s latest extreme ultraviolet lithography system has produced the smallest features ever created in a single step by a commercial chip-patterning machine, etching structures just 8 nanometers wide onto silicon wafers. The upgraded optics enable 2.9 times more transistors per chip than the previous generation. Denser chips are central to the AI infrastructure buildout because they allow data centers to run more computations without proportional increases in power consumption, a binding constraint as training and inference costs scale.

- Research: Effective Strategies for Asynchronous Software Engineering Agents (CMU)

As AI coding agents tackle larger software projects, coordinating multiple agents on a shared codebase becomes a core challenge. CMU researchers found that giving each agent its own isolated workspace and merging results through structured checkpoints, borrowing directly from how human developer teams collaborate, improved accuracy by up to 27% over single-agent approaches. Simply giving one agent more time didn’t help. The binding constraint was task-delegation quality, suggesting that the infrastructure for coordinating AI agents may matter as much as the quality of the agents themselves.

Radical Reads is edited by Ebin Tomy (Analyst, Radical Ventures)