Beyond groundbreaking research, most of today’s AI advances have one thing in common: they are venture-backed. State-of-the-art AI systems are fueled by vast troves of data, access to scarce computing resources, and teams of high-priced AI researchers. OpenAI, Cohere, and Anthropic, the startups behind the large language models that helped bring AI to the mainstream over the past year, received multiple rounds of funding from venture capital firms. Google and Meta – whose industrial labs churned out the biggest AI breakthroughs of the last decade – were, at one time, fueled by venture capital. Last year, academic labs and non-profits produced five significant large language models. Venture-funded technology companies produced 25.

The rapid pace of advances is increasing the urgency to ensure that AI systems are developed and deployed safely. Just as venture capital played a critical role in spurring the current AI boom, the investment decisions made in the boardrooms of VC offices today will influence the AI technologies that shape future generations. While there are voices arguing that “trust and safety” measures may impede progress, we believe that the venture capital industry – the underwriters of tomorrow’s innovations – must approach investments in AI with a sense of shared responsibility.

To that end, Radical Ventures, the world’s largest venture fund dedicated exclusively to AI, is releasing an open-source resource aimed at fostering the safe and responsible application of AI. Based on the same resources Radical uses as part of its internal diligence and deliberation processes, Radical’s Responsible AI for Startups (RAIS) framework is now accessible to the public and the broader venture capital community, as a practical tool to help VCs assess early-stage AI companies and technologies.

Inspired by existing literature in the safety and responsibility disciplines, the framework covers three key areas: social and ethical impact, regulatory compliance, and technical risk. RAIS is a risk register, providing a familiar framework for guiding investment considerations of businesses and technologies that may impact society. Individual vulnerabilities, or threats, are given a score based on the likelihood of that threat occurring and the magnitude of the consequence. For the same reason that risk registers are useful – they guide conversation rather than prescribe outcomes – the RAIS framework should support and codify an investment committee’s thinking rather than act as a checklist.
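For readers unfamiliar with risk registers, the scoring idea described above can be sketched in a few lines of Python. This is only an illustration, not the RAIS framework itself: the three areas come from the text, but the 1-to-5 scales, the choice to multiply likelihood by magnitude, the `Threat` class, and the example threats are all assumptions made for this sketch.

```python
from dataclasses import dataclass

# The three areas are named in the article; everything else is an assumption.
AREAS = ("social and ethical impact", "regulatory compliance", "technical risk")


@dataclass
class Threat:
    name: str
    area: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    magnitude: int   # assumed scale: 1 (minor) to 5 (severe)
    mitigation: str = ""  # optional note on how the threat could be reduced

    def __post_init__(self):
        if self.area not in AREAS:
            raise ValueError(f"unknown area: {self.area}")
        for value in (self.likelihood, self.magnitude):
            if not 1 <= value <= 5:
                raise ValueError("likelihood and magnitude must be between 1 and 5")

    @property
    def score(self) -> int:
        # Assumed scoring rule: likelihood multiplied by magnitude.
        return self.likelihood * self.magnitude


def rank(threats):
    """Return threats ordered from highest to lowest score."""
    return sorted(threats, key=lambda t: t.score, reverse=True)


# Hypothetical entries, invented purely to show the mechanics.
register = [
    Threat("Hypothetical: biased outputs", "social and ethical impact", 3, 4,
           "bias evaluation before launch"),
    Threat("Hypothetical: data licensing gap", "regulatory compliance", 2, 5),
    Threat("Hypothetical: model drift", "technical risk", 4, 2, "monitoring"),
]

for threat in rank(register):
    print(f"{threat.score:>2}  {threat.area}: {threat.name}")
```

With these made-up entries the threats are listed in order of their scores (12, 10, and 8). The ranking is meant as a prompt for discussion about which threats deserve attention first, not as a pass/fail gate.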

Venture capital is designed to selectively assume risk. When safety risks are identified in RAIS, the framework provides mitigation strategies. Understanding what such mitigation will require may inform an investment, for example, by ensuring adequate funding for the relevant tools and processes. The framework helps investors think through best practices (such as those documented in critical algorithm studies) and apply these recommendations as part of a company’s core business strategy.

When properly designed, strategic diligence tools can increase long-term value and minimize the risk of investing in a company. Each venture fund’s version of the framework will be unique, reflecting that fund’s responsible investment principles. In making the framework open source, we hope to encourage other investors to share redacted cases to help build a repository of examples and facilitate adoption. By driving innovation that sustains long-term performance, maximizing opportunities for growth while minimizing risks, a framework for conducting due diligence into responsibility practices in AI companies would benefit the entire investment ecosystem.

In developing the framework, Radical worked closely with the Vector Institute for AI to ensure this framework adheres to the Institute’s AI Trust and Safety Principles, a set of guardrails gathered from across multiple sectors to reflect the values of practitioners in AI ecosystems around the world. As a technology partner, the Vector Institute will also ensure model evaluations are up to date and will provide input on mitigation strategies. Radical is also collaborating with Responsible Innovation Labs (RIL), a coalition of investors and technology executives who recognize the need for a more rigorous approach to tackling the challenges and opportunities of this moment. Radical is a signatory to RIL’s voluntary commitment to responsible AI.

Today, at a workshop facilitated by Andrew Strait of the Ada Lovelace Institute, we will begin more proactively sharing the RAIS framework with venture capital investors, walking through case studies of how such a responsible AI framework can be applied to potential investments with a material impact on society. As we seek to make AI safe for future generations, decision making about what technologies we fund is where the rubber ultimately meets the road.

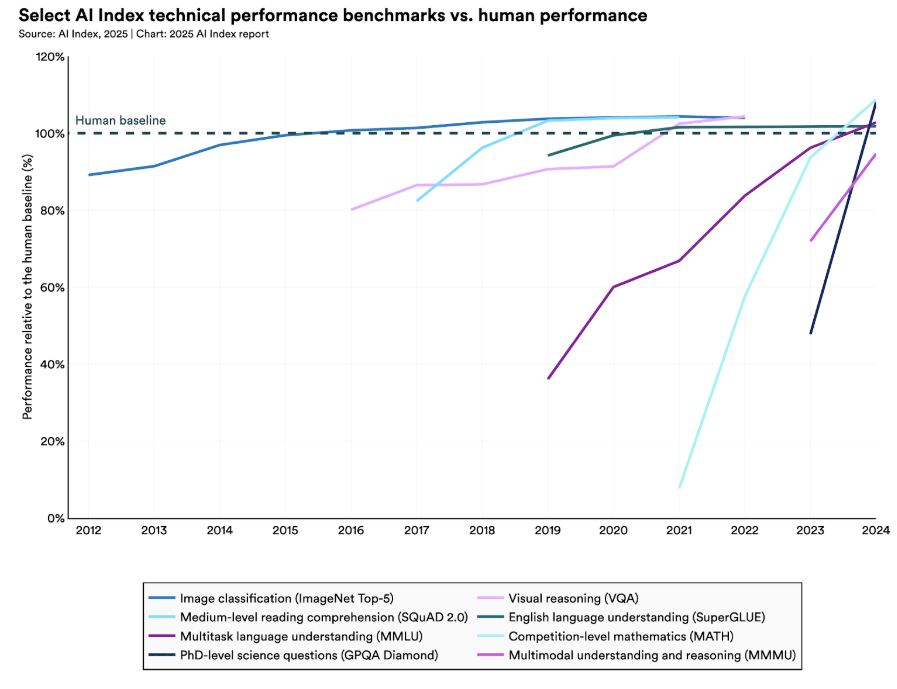

- Models evaluated by Holistic Evaluation of Language Models (HELM), published in Transactions on Machine Learning Research, p. 43 (08/23)

- Sisson, L. (2020, October 21). Venture Capital and Public Purpose. Harvard Kennedy School Belfer Center: Perspectives on Public Purpose for Emerging Technologies.

Radical Reads is edited by Ebin Tomy (Analyst, Radical Ventures)